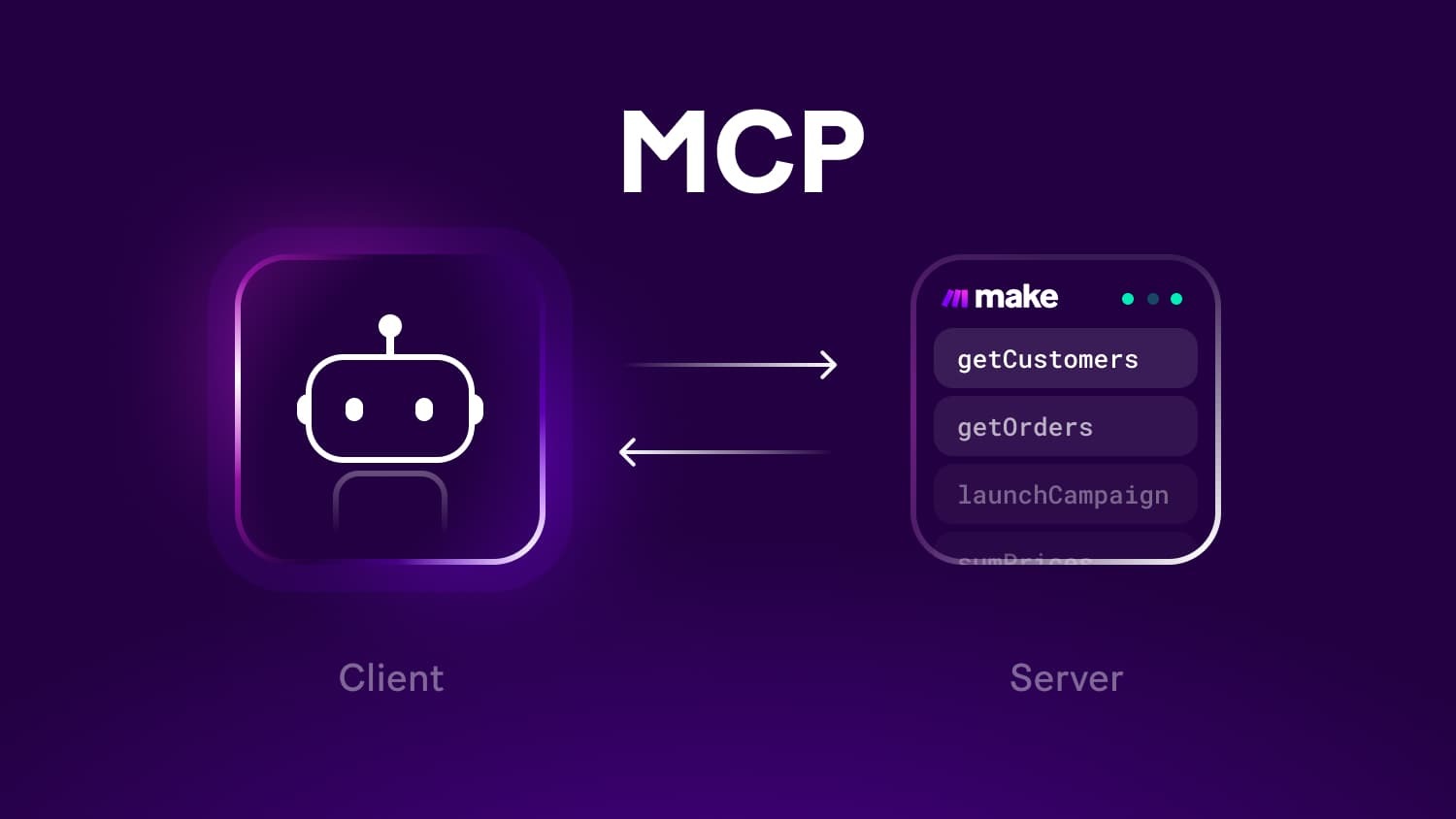

The Model Context Protocol (MCP) has shifted from an experimental bridge into a practical backbone for multimodal and multi-model apps. At its core, MCP lets you run “servers” that expose tools, data sources, and streaming events to clients such as Claude and ChatGPT.

Instead of wiring brittle APIs for every model, teams register capabilities once and allow any MCP-aware client to discover, call, and observe them.

That unblocks rapid prototyping, shared governance, and consistent telemetry across the stack. In this article, you will find a clear picture of how MCP evolved, what it looks like in production, and why its design compares favorably to one-off integrations for real, maintainable AI systems.

What MCP actually standardizes

Successful MCP deployments standardize three things: capability discovery, tool invocation, and stateful sessions. Discovery defines how clients learn what a server can do, including input schemas and permission scopes.

Invocation describes how requests and responses flow, including streaming tokens and tool results. Sessions keep context alive across turns so tools can cache, throttle, and trace work. When those parts are consistent, product teams reduce glue code and centralize observability.

The result is a stable spine that can serve multiple user interfaces, from internal chat surfaces to agentic workflows, while keeping credentials, limits, and audit events in one place.
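To make discovery, invocation, and sessions concrete, here is a minimal in-memory dispatcher. The request and response shapes loosely follow MCP's JSON-RPC 2.0 `tools/list` and `tools/call` methods, but this is a sketch rather than the official SDK. The `echo_upper` tool, the `Session` class, and the `handle` function are hypothetical, and real transports, initialization, and capability exchange are left out.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Callable

# Hypothetical tool registry: name -> (descriptor advertised to clients, handler).
TOOLS: dict[str, tuple[dict[str, Any], Callable[[dict[str, Any]], str]]] = {
    "echo_upper": (
        {
            "name": "echo_upper",
            "description": "Return the input text in upper case.",
            "inputSchema": {
                "type": "object",
                "properties": {"text": {"type": "string"}},
                "required": ["text"],
            },
        },
        lambda args: args["text"].upper(),
    ),
}


@dataclass
class Session:
    """Per-connection state that survives across turns (hypothetical)."""
    session_id: str
    call_log: list[str] = field(default_factory=list)


def handle(session: Session, raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request and return the JSON response."""
    req = json.loads(raw)
    method, req_id = req.get("method"), req.get("id")
    if method == "tools/list":
        # Discovery: advertise every tool's descriptor, including its input schema.
        result: dict[str, Any] = {"tools": [desc for desc, _ in TOOLS.values()]}
    elif method == "tools/call":
        name = req["params"]["name"]
        args = req["params"].get("arguments", {})
        if name not in TOOLS:
            error = {"code": -32602, "message": f"unknown tool: {name}"}  # JSON-RPC "invalid params"
            return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": error})
        _, fn = TOOLS[name]
        session.call_log.append(name)  # session state a server could use for caching or tracing
        result = {"content": [{"type": "text", "text": fn(args)}], "isError": False}
    else:
        error = {"code": -32601, "message": f"method not found: {method}"}  # JSON-RPC "method not found"
        return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": error})
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})
```

A client would first send `tools/list` to learn each tool's `inputSchema`, then issue `tools/call` with matching `arguments`; the `Session` object is where caching, throttling, or tracing state could live.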

Where innovation is happening right now

Modern teams are pushing MCP forward in substance, not just in mechanics. Schema rigor, typed errors, and event channels are improving reliability, while sandboxed runners limit the blast radius of untrusted tools.

You can also see progress in developer ergonomics: server templates, policy bundles, and test harnesses shorten the path from idea to production. For a deeper dive into practitioner-led advances and playbooks, many teams publish write-ups on innovation in MCP, with examples of exposing search, retrieval, billing, and automation to multiple LLMs without duplicating work.

The common thread is a preference for declarative contracts so clients can negotiate capabilities instead of hardcoding them.
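A minimal sketch of such negotiation, assuming both sides declare their capabilities as nested boolean flags: the client enables only the features and options that both parties support, instead of hardcoding them. The capability names loosely echo those exchanged during MCP initialization, but the `negotiate` helper and the example dictionaries are hypothetical.

```python
# Capabilities as {feature: {option: enabled}} (a hypothetical, simplified shape).
Caps = dict[str, dict[str, bool]]


def negotiate(server: Caps, client: Caps) -> Caps:
    """Keep only features both sides declare, and only options both enable."""
    enabled: Caps = {}
    for feature in sorted(server.keys() & client.keys()):
        shared = server[feature].keys() & client[feature].keys()
        enabled[feature] = {
            opt: True
            for opt in sorted(shared)
            if server[feature][opt] and client[feature][opt]
        }
    return enabled
```

Anything missing from the result is treated as unavailable, so a server can add new options without breaking older clients.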

Highlights

- Typed schemas reduce mismatches between tools and clients

- Event streams enable incremental UX and timeouts

- Policy modules make permissions explicit and reviewable
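The event-stream highlight can be sketched with `asyncio`: a hypothetical `slow_search` tool yields partial results as they become ready, and a hypothetical `consume` loop collects them incrementally while enforcing a per-chunk timeout. This does not model MCP's actual streaming transport; it only illustrates why incremental events make timeouts graceful.

```python
import asyncio
from collections.abc import AsyncIterator


async def slow_search(query: str, delays: list[float]) -> AsyncIterator[str]:
    """Hypothetical tool that streams partial results as they become ready."""
    for i, delay in enumerate(delays):
        await asyncio.sleep(delay)  # stand-in for real work
        yield f"{query}: result {i}"


async def consume(stream: AsyncIterator[str], chunk_timeout: float) -> tuple[list[str], bool]:
    """Collect chunks as they arrive; give up if any single chunk is too slow.

    Returns the chunks received so far and whether the stream completed.
    """
    received: list[str] = []
    iterator = stream.__aiter__()
    while True:
        try:
            chunk = await asyncio.wait_for(iterator.__anext__(), timeout=chunk_timeout)
        except StopAsyncIteration:
            return received, True
        except asyncio.TimeoutError:
            return received, False  # keep partial results so the UI can still render them
        received.append(chunk)  # a real client would update the UI incrementally here
```

For example, `asyncio.run(consume(slow_search("q", [0.0, 1.0]), chunk_timeout=0.1))` returns the first result and `False`, so the UI can show what arrived instead of failing the whole request.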

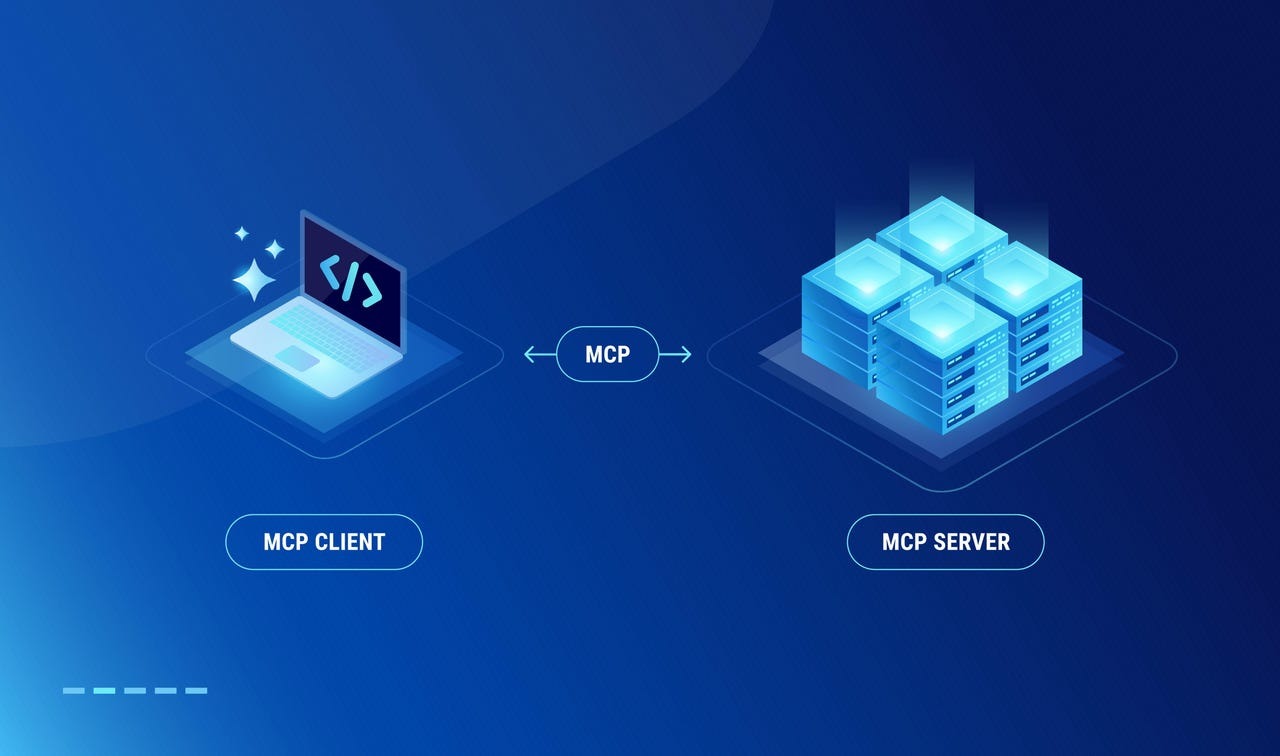

Architecture at a glance

A robust MCP rollout usually follows a simple structure that scales with you.

- The MCP client sits inside your assistant or agent. It discovers servers, lists tools, and calls them with validated inputs.

- MCP servers each encapsulate a domain: search, documents, finance, code execution, or scheduling. They own credentials and rate limits.

- Policy and identity decide who can call what, with scopes attached to tools and resources.

- Observability captures spans, cost, and safety events so you can debug and optimize sessions.

- Fallbacks select alternate tools or models when latency or quotas bite, then record the choice for later analysis.

This separation of concerns keeps your surface simple, while letting platform engineers evolve capabilities without breaking end-user flows.
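The fallback step in that structure could look roughly like the following sketch. The `FallbackRouter` class and the `QuotaExceeded` error are hypothetical; the point is that the router tries tools in priority order, skips ones that time out or run out of quota, and records which tool answered so the choice can be analyzed later.

```python
from dataclasses import dataclass, field
from typing import Callable


class QuotaExceeded(Exception):
    """Hypothetical error a tool raises when its quota is used up."""


@dataclass
class FallbackRouter:
    """Try tools in priority order and record which one actually answered."""

    tools: list[tuple[str, Callable[[str], str]]]
    decisions: list[dict[str, str]] = field(default_factory=list)

    def call(self, query: str) -> str:
        skipped: list[str] = []
        for name, tool in self.tools:
            try:
                answer = tool(query)
            except (TimeoutError, QuotaExceeded) as exc:
                skipped.append(f"{name}: {type(exc).__name__}")
                continue
            # Record the choice (and why earlier options were skipped) for later analysis.
            self.decisions.append({"query": query, "chosen": name, "skipped": "; ".join(skipped)})
            return answer
        raise RuntimeError("no tool could answer: " + "; ".join(skipped))
```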

Development paths compared

Below is a practical comparison that teams use when deciding how to expose capabilities to LLMs.

| Criterion | Ad-hoc API calls from app code | Plugins tied to one model | MCP servers with shared policy |
|---|---|---|---|
| Time to first result | Fast for one UI | Moderate | Fast with templates |
| Cross-client reuse | Low | Low to medium | High |
| Policy and auditing | Scattered | Model-specific | Centralized |
| Cost controls | In app logic | Varies by vendor | Uniform budgets per tool |
| Long-term maintenance burden | High | Medium to high | Low to medium |

Takeaway: MCP reduces duplication and centralizes policy. The upside increases with the number of interfaces, tools, and models you support.

Building an MCP server the right way

Before we go into implementation details, it helps to frame the goal. A good server is boring in the best sense: predictable, typed, observable, and easy to rotate. Treat it as a product with versioning and clear change logs rather than a script that only one developer understands.

Protocol and schema choices

Start with a stable transport and explicit JSON schemas. Define each tool with inputs, outputs, and known error families. Include pagination and partial results for anything that might stream. Add correlation IDs and a request budget so the client can negotiate time and cost.
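A sketch of such a contract in plain Python, assuming a hypothetical paginated `search_docs` tool: the schema is a small subset of JSON Schema, errors carry an explicit family the client can branch on, results include a cursor for the next page, and every response echoes a correlation ID. The validator is deliberately tiny; a production server would more likely use a full JSON Schema validator.

```python
import uuid
from dataclasses import dataclass
from enum import Enum
from typing import Any, Optional


class ErrorFamily(Enum):
    """Known error families that clients can branch on without parsing messages."""
    INVALID_INPUT = "invalid_input"
    BUDGET_EXCEEDED = "budget_exceeded"


class ToolError(Exception):
    def __init__(self, family: ErrorFamily, detail: str) -> None:
        super().__init__(f"{family.value}: {detail}")
        self.family = family


# Hypothetical contract for a paginated search tool.
SEARCH_SCHEMA: dict[str, Any] = {
    "type": "object",
    "properties": {
        "query": {"type": "string"},
        "cursor": {"type": "integer"},
        "page_size": {"type": "integer"},
    },
    "required": ["query"],
}
_PY_TYPES = {"string": str, "integer": int}


def validate(args: dict[str, Any], schema: dict[str, Any]) -> None:
    """Check required keys and primitive types (a tiny subset of JSON Schema)."""
    for key in schema["required"]:
        if key not in args:
            raise ToolError(ErrorFamily.INVALID_INPUT, f"missing '{key}'")
    for key, value in args.items():
        spec = schema["properties"].get(key)
        if spec is None:
            raise ToolError(ErrorFamily.INVALID_INPUT, f"unexpected '{key}'")
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, _PY_TYPES[spec["type"]]):
            raise ToolError(ErrorFamily.INVALID_INPUT, f"'{key}' must be {spec['type']}")


@dataclass
class Page:
    items: list[str]
    next_cursor: Optional[int]  # None means there are no more pages
    correlation_id: str


CORPUS = [f"doc-{i}" for i in range(5)]  # stand-in data


def search_docs(args: dict[str, Any], budget_ms: int, correlation_id: Optional[str] = None) -> Page:
    """Validate input, honor the request budget, and return one page of results."""
    validate(args, SEARCH_SCHEMA)
    if budget_ms <= 0:
        raise ToolError(ErrorFamily.BUDGET_EXCEEDED, "request budget already spent")
    cid = correlation_id or str(uuid.uuid4())  # echo the caller's ID or mint one
    start, size = args.get("cursor", 0), args.get("page_size", 2)
    hits = [doc for doc in CORPUS if args["query"] in doc]
    end = start + size
    return Page(hits[start:end], end if end < len(hits) else None, cid)
```

A client that receives `ErrorFamily.BUDGET_EXCEEDED` can stop retrying, while `INVALID_INPUT` points at a bug in the caller, and neither side has to parse error strings.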

Put version numbers on every contract, then publish examples and fixtures that your CI uses to validate changes. With clear schemas, your client can auto-generate validators and type hints, which catches whole classes of input mismatches before they ship and shortens onboarding for new contributors.
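As one example of a CI check built on versioned contracts, the sketch below compares a tool's previous and proposed input schemas and fails the build when a breaking change ships without a major version bump. The semantic-versioning rule and the `breaking_changes` and `check_release` helpers are assumptions for illustration, not part of MCP.

```python
from typing import Any


def major(version: str) -> int:
    """Major component of a 'MAJOR.MINOR.PATCH' version string."""
    return int(version.split(".")[0])


def breaking_changes(old: dict[str, Any], new: dict[str, Any]) -> list[str]:
    """List input-schema changes that would break clients built against `old`."""
    old_schema, new_schema = old["inputSchema"], new["inputSchema"]
    problems = [
        f"removed property '{p}'"
        for p in sorted(set(old_schema["properties"]) - set(new_schema["properties"]))
    ]
    newly_required = set(new_schema.get("required", [])) - set(old_schema.get("required", []))
    problems += [f"new required property '{p}'" for p in sorted(newly_required)]
    return problems


def check_release(old: dict[str, Any], new: dict[str, Any]) -> None:
    """Fail the CI job if a breaking change ships without a major version bump."""
    problems = breaking_changes(old, new)
    if problems and major(new["version"]) <= major(old["version"]):
        raise SystemExit(f"{new['name']} {new['version']}: " + ", ".join(problems))
```

In CI, `old` would be the contract on the main branch and `new` the one in the pull request; adding an optional property passes, while removing one or making a new field required forces a major bump.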

Auth, policy, and least privilege

Keep secrets and scopes at the server boundary. Issue short-lived tokens to the client and map them to policies that live alongside the tool definitions. Express limits in human language as well as code so product and security teams can review them together. Require explicit grants for high-risk tools such as file writes, code execution, and payment actions.
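A minimal sketch of scope checks at the server boundary, assuming a hypothetical `TOOL_POLICY` table, a `Token` type, and `issue_token`/`authorize` helpers. Tokens are short-lived, each tool maps to a required scope, high-risk tools additionally need an explicit per-tool grant, and each rule carries a plain-language summary for reviewers.

```python
import time
from dataclasses import dataclass
from typing import Iterable, Optional

# Hypothetical policy table kept next to the tool definitions. The "summary"
# field states each rule in plain language for product and security review.
TOOL_POLICY = {
    "search_docs": {"scope": "docs:read", "high_risk": False,
                    "summary": "Anyone with docs:read may search documents."},
    "write_file": {"scope": "files:write", "high_risk": True,
                   "summary": "Writes need files:write plus an explicit per-tool grant."},
    "send_payment": {"scope": "payments:send", "high_risk": True,
                     "summary": "Payments need payments:send plus an explicit per-tool grant."},
}


@dataclass(frozen=True)
class Token:
    subject: str
    scopes: frozenset[str]
    expires_at: float
    grants: frozenset[str] = frozenset()  # high-risk tools approved individually


def issue_token(subject: str, scopes: Iterable[str], ttl_s: float = 300.0,
                grants: Iterable[str] = (), now: Optional[float] = None) -> Token:
    """Mint a short-lived token; the five-minute default TTL is an arbitrary example."""
    now = time.time() if now is None else now
    return Token(subject, frozenset(scopes), now + ttl_s, frozenset(grants))


def authorize(token: Token, tool: str, now: Optional[float] = None) -> tuple[bool, str]:
    """Return (allowed, reason) for one tool call."""
    now = time.time() if now is None else now
    policy = TOOL_POLICY.get(tool)
    if policy is None:
        return False, "unknown tool"
    if now >= token.expires_at:
        return False, "token expired"
    if policy["scope"] not in token.scopes:
        return False, f"missing scope {policy['scope']}"
    if policy["high_risk"] and tool not in token.grants:
        return False, "high-risk tool requires an explicit grant"
    return True, "ok"
```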

Finally, log every call with inputs, outputs, and redaction rules so you can investigate incidents without exposing user data.
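Redacted audit logging could be sketched as follows. The field names in `REDACT_FIELDS` and the e-mail pattern are hypothetical rules; real deployments would derive them from their own data classification.

```python
import json
import re
from typing import Any

# Hypothetical redaction rules: field names to mask and a pattern to scrub.
REDACT_FIELDS = {"password", "api_key", "card_number"}
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact(value: Any) -> Any:
    """Recursively mask sensitive fields and scrub e-mail addresses from strings."""
    if isinstance(value, dict):
        return {k: "[REDACTED]" if k in REDACT_FIELDS else redact(v) for k, v in value.items()}
    if isinstance(value, list):
        return [redact(v) for v in value]
    if isinstance(value, str):
        return EMAIL.sub("[EMAIL]", value)
    return value


def audit_line(tool: str, correlation_id: str, inputs: dict[str, Any], outputs: Any) -> str:
    """Build one structured audit record with redaction applied to inputs and outputs."""
    record = {
        "tool": tool,
        "correlation_id": correlation_id,
        "inputs": redact(inputs),
        "outputs": redact(outputs),
    }
    return json.dumps(record, sort_keys=True)
```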

Performance, cost, and reliability

You can treat an MCP server like a mini service mesh for tools. Set budgets per request and per session, then propagate those to downstream systems. Use circuit breakers and exponential backoff for flaky dependencies.
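The sketch below pairs a simple circuit breaker with exponential backoff and full jitter, bounded by a per-request time budget. `CircuitBreaker`, `CircuitOpen`, and `retry_with_backoff` are hypothetical names and the thresholds are arbitrary defaults; the clock and sleep functions are injectable so the behavior can be tested without waiting.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpen(Exception):
    """Raised instead of calling a dependency that keeps failing."""


class CircuitBreaker:
    """Open after `threshold` consecutive failures; allow a trial call after `cooldown` seconds."""

    def __init__(self, threshold: int = 3, cooldown: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold, self.cooldown, self.clock = threshold, cooldown, clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.cooldown:
                raise CircuitOpen("dependency temporarily disabled")
            self.opened_at = None  # half-open: let a trial call through
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()  # (re)open the circuit
            raise
        self.failures = 0
        return result


def retry_with_backoff(fn: Callable[[], T], budget_s: float, base_s: float = 0.1,
                       max_attempts: int = 5, sleep: Callable[[float], None] = time.sleep,
                       clock: Callable[[], float] = time.monotonic) -> T:
    """Retry with exponential backoff and full jitter, never sleeping past the budget."""
    deadline = clock() + budget_s
    attempt = 0
    while True:
        try:
            return fn()
        except CircuitOpen:
            raise  # retrying an open circuit only burns budget
        except Exception:
            attempt += 1
            delay = random.uniform(0, base_s * 2 ** (attempt - 1))
            if attempt >= max_attempts or clock() + delay > deadline:
                raise
            sleep(delay)
```

Combined, a call might read `retry_with_backoff(lambda: breaker.call(fetch), budget_s=2.0)`, where `fetch` stands for whatever downstream dependency the tool wraps; a real server would also forward the remaining budget to `fetch` so downstream systems can stop early.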

Cache immutable resources by key and content hash. For cost, record token usage when tools cause model calls, and surface per-tool cost dashboards to product owners. Reliability climbs when timeouts, retries, and fallbacks are consistent across servers rather than re-implemented in each client.
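A sketch of both ideas, assuming results are immutable for a given input. `ToolCache` keys entries on the tool name plus a SHA-256 hash of the canonicalized arguments (a resource's own content hash or version could be folded into the key the same way), and `CostLedger` accumulates the tokens each tool triggers. Both classes and the flat per-token price are hypothetical.

```python
import hashlib
import json
from collections import defaultdict
from typing import Any, Callable


class ToolCache:
    """Cache results of immutable lookups, keyed by tool name plus a hash of the arguments."""

    def __init__(self) -> None:
        self._store: dict[str, Any] = {}
        self.hits = 0

    @staticmethod
    def key(tool: str, args: dict[str, Any]) -> str:
        # Canonical JSON so that argument order does not change the key.
        canonical = json.dumps(args, sort_keys=True, separators=(",", ":"))
        return f"{tool}:{hashlib.sha256(canonical.encode()).hexdigest()}"

    def get_or_call(self, tool: str, args: dict[str, Any], fn: Callable[[dict[str, Any]], Any]) -> Any:
        k = self.key(tool, args)
        if k in self._store:
            self.hits += 1
        else:
            self._store[k] = fn(args)
        return self._store[k]


class CostLedger:
    """Accumulate model tokens that each tool triggers, for per-tool cost dashboards."""

    def __init__(self, usd_per_1k_tokens: float) -> None:
        self.rate = usd_per_1k_tokens  # hypothetical flat price; real pricing varies by model
        self.tokens: defaultdict[str, int] = defaultdict(int)

    def record(self, tool: str, tokens: int) -> None:
        self.tokens[tool] += tokens

    def cost_by_tool(self) -> dict[str, float]:
        return {tool: n / 1000 * self.rate for tool, n in self.tokens.items()}
```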

Subnote: Teams often cut monthly spend by double digits when they move prompt-level retrieval into MCP tools with shared caching, instead of repeating the same fetch in multiple assistants.

Security and governance best practices

Security improvements land fastest when they ride with developer experience.

- Establish a trust catalog of tools, each with scope, data classification, and owner.

- Run dynamic tests that fuzz inputs and verify redactions in logs.

- Separate signing keys for staging and production.

- Ship a policy preview endpoint so reviewers can simulate who can call which tools.

- Track PII and secrets as first-class types, not strings, which prevents accidental echo.

These habits turn compliance from a quarterly scramble into a daily guardrail that developers appreciate.
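Two of these habits fit in a few lines. The hypothetical `Secret` type masks its value in `str`, `repr`, and f-strings, so only an explicit `reveal()` exposes it, and a small trust catalog with a `preview` function simulates which tools each role could call. The catalog entries, roles, and scope names are illustrative assumptions.

```python
from dataclasses import dataclass


class Secret:
    """Wrap a sensitive value so logging or string formatting cannot echo it by accident."""

    __slots__ = ("_value",)

    def __init__(self, value: str) -> None:
        self._value = value

    def reveal(self) -> str:
        """Explicit, easy-to-audit access to the raw value."""
        return self._value

    def __repr__(self) -> str:
        return "Secret('***')"

    __str__ = __repr__

    def __format__(self, spec: str) -> str:
        return repr(self)


@dataclass(frozen=True)
class CatalogEntry:
    """One row of a trust catalog: who owns a tool and what data it touches."""
    tool: str
    scope: str
    data_class: str  # e.g. "public", "internal", "pii"
    owner: str


# Hypothetical catalog entries for illustration.
CATALOG = [
    CatalogEntry("search_docs", "docs:read", "internal", "search-team"),
    CatalogEntry("lookup_customer", "crm:read", "pii", "crm-team"),
]


def preview(role_scopes: dict[str, set[str]]) -> dict[str, list[str]]:
    """Simulate which catalog tools each role could call, for policy review."""
    return {
        role: sorted(entry.tool for entry in CATALOG if entry.scope in scopes)
        for role, scopes in role_scopes.items()
    }
```

Because `Secret` is not JSON-serializable, `json.dumps` also fails loudly instead of leaking the value.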

Conclusion

MCP has matured into a practical, auditable way to connect modern assistants to the real work your business depends on.

By standardizing discovery, invocation, and sessions, it removes glue code, centralizes governance, and opens the door to multi-model experiences that feel consistent across surfaces.

The most successful teams treat MCP servers as stable products with typed contracts, strong policy, clean observability, and measured benchmarks.

If you take that path, innovation becomes routine, comparisons favor your platform, and your assistants grow from prototypes into trustworthy tools that people rely on every day.